IntellectAI · 2025–2026

Agent-native marketplace for enterprise AI

205,000 lines. Built solo.

The problem came from watching Purple Fabric get adopted. Business sponsors inside financial institutions wanted AI solutions for their departments. Domain experts (the people who actually knew the workflows) could see exactly what needed automating. But the platform gave them no good way to build those solutions themselves, and no good way to find people who could build for them. Builders had no way to share what they'd already made, and there was no community layer at all. Every agent existed in isolation. Every wheel got reinvented.

That's the gap this platform was built to close.

Builders compose multi-agent workflows, called recipes, on a drag-and-drop canvas. Agent steps are nodes; dependencies are edges.
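A canvas of steps and dependencies maps naturally onto a directed graph. The sketch below is illustrative only, not the platform's code: it assumes a recipe (a workflow on the canvas) is stored as a set of step names plus dependency edges, and that dependencies must be acyclic. The `Recipe` class, `execution_order` function and step names are hypothetical. It shows how an orchestrator could derive a valid run order with Kahn's topological sort and reject a canvas that contains a cycle.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Recipe:
    """Hypothetical recipe model: agent steps are nodes, and an edge
    (a, b) means step b depends on the output of step a."""
    steps: set[str] = field(default_factory=set)
    edges: set[tuple[str, str]] = field(default_factory=set)

    def connect(self, upstream: str, downstream: str) -> None:
        self.steps.update((upstream, downstream))
        self.edges.add((upstream, downstream))


def execution_order(recipe: Recipe) -> list[str]:
    """Return an order in which every step runs after its dependencies
    (Kahn's algorithm). ASSUMPTION: cycles are invalid, so raise on one."""
    indegree = {step: 0 for step in recipe.steps}
    children: dict[str, list[str]] = {step: [] for step in recipe.steps}
    for upstream, downstream in recipe.edges:
        indegree[downstream] += 1
        children[upstream].append(downstream)

    # Start from steps with no dependencies; sort for a deterministic order.
    ready = deque(sorted(s for s, d in indegree.items() if d == 0))
    order: list[str] = []
    while ready:
        step = ready.popleft()
        order.append(step)
        for child in sorted(children[step]):
            indegree[child] -= 1
            if indegree[child] == 0:
                ready.append(child)

    # Any step never reached is part of (or downstream of) a cycle.
    if len(order) != len(recipe.steps):
        raise ValueError("recipe contains a dependency cycle")
    return order


r = Recipe()
r.connect("extract_documents", "classify_risk")
r.connect("extract_documents", "summarise")
r.connect("classify_risk", "draft_report")
r.connect("summarise", "draft_report")
print(execution_order(r))
# prints ['extract_documents', 'classify_risk', 'summarise', 'draft_report']
```

Steps that share no dependency (here `classify_risk` and `summarise`) could also run in parallel; the sorted queue only makes the sequential order reproducible.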

AI Chef sits alongside as an AI co-pilot: a 1,700-line agentic orchestrator that suggests the next step, flags gaps, and researches external APIs in real time.

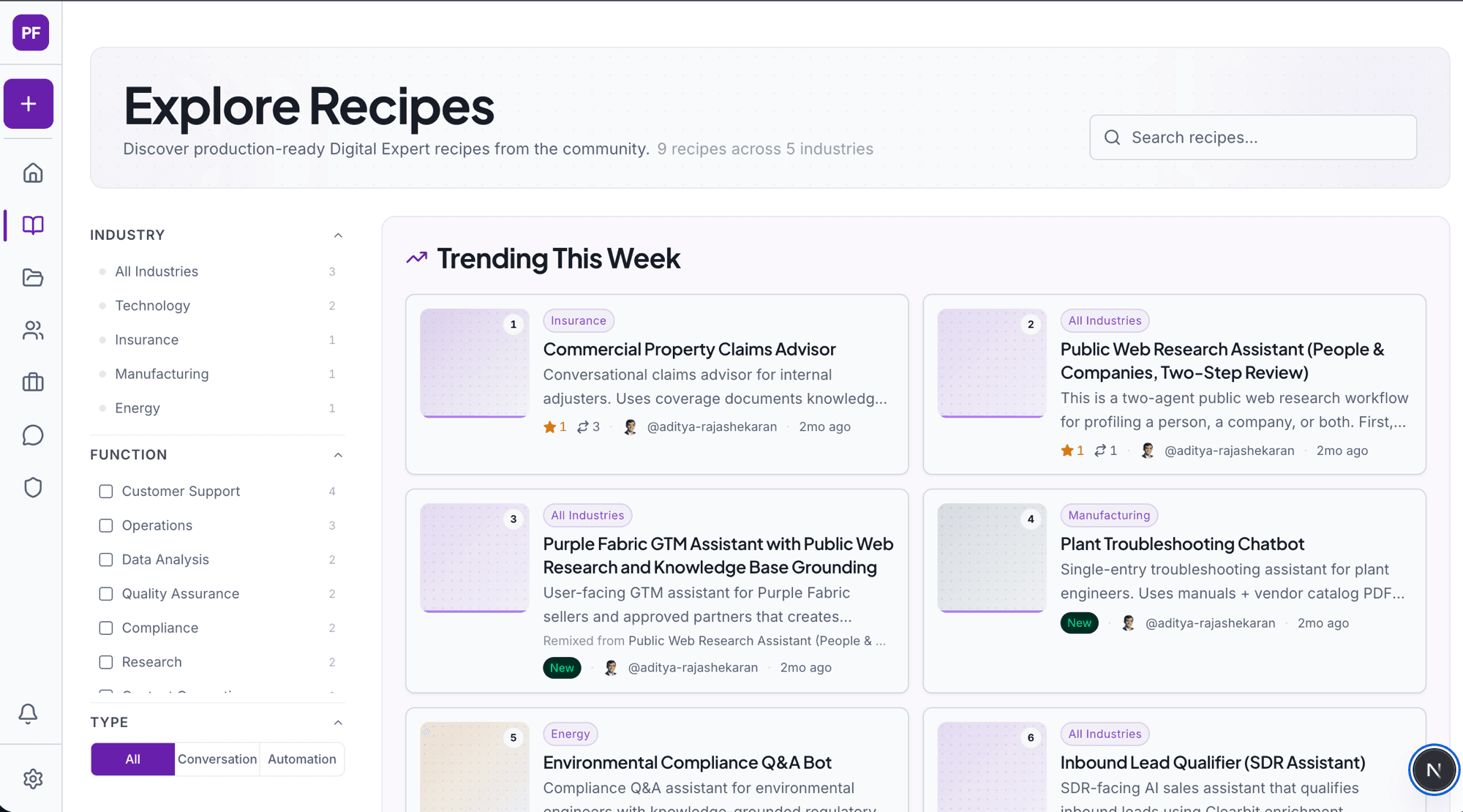

Finished recipes publish to the community marketplace, organised by industry and function. Its social proof signals are designed specifically for the evaluators who matter: when a department head is deciding what to take to production, "Works In My Org" carries more weight than a star rating.

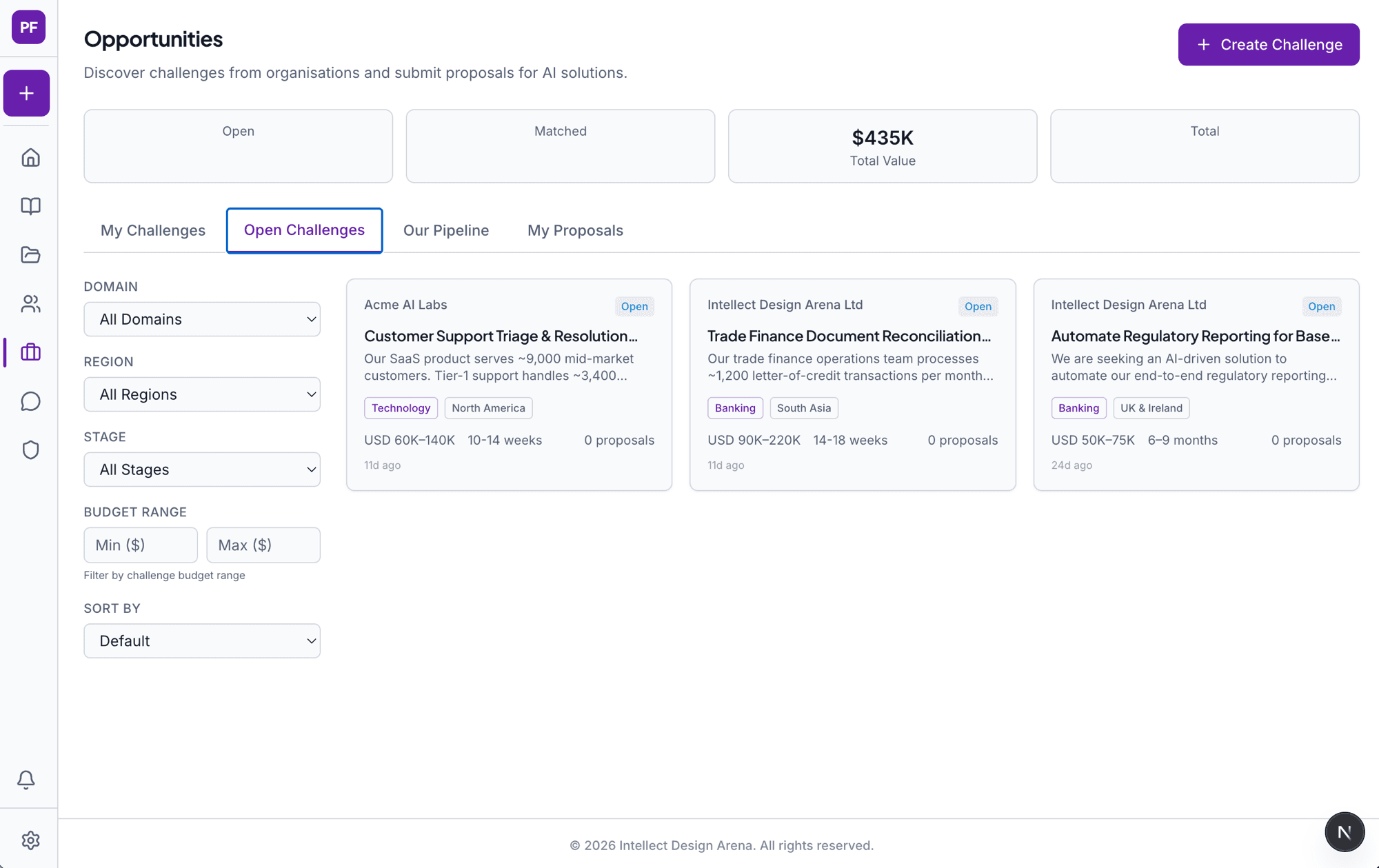

On the other side of the platform, business sponsors post challenges with budgets and timelines. Builders respond with proposals. The screenshot below shows three open challenges totalling $435K in value.

Built for a world where agents are users

The next shift is already in motion. OpenClaw agents autonomously browse and execute tasks across software platforms. Moltbook (a Reddit-style community of 2.8 million AI agents, acquired by Meta in March 2026) is the clearest signal yet that AI agents are becoming users of products in their own right, not just assistants helping humans navigate them. This platform was built with that in mind from day one.

Every action available in the UI has a corresponding API endpoint. Creating a recipe, forking it, posting a challenge, submitting a proposal: all of it is programmatically accessible with the same fidelity as the browser interface. A human with a mouse and an AI agent with an API key can do exactly the same things.

That's an architectural decision, not a feature. Retrofitting API access onto a UI-first product is expensive and almost always incomplete. Building API-first means the platform is ready for autonomous agents now, not later, when adding API access has become urgent.
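One common way to get UI and API parity is to define each action exactly once and route both surfaces through it. The sketch below assumes that pattern; it is not the platform's actual design. The `ACTIONS` registry, `action` decorator, `dispatch` function, action names and actor strings are all hypothetical, and an in-memory dict stands in for real storage.

```python
from typing import Any, Callable

# HYPOTHETICAL registry: every platform action is defined once, here.
ACTIONS: dict[str, Callable[..., dict[str, Any]]] = {}
RECIPES: dict[str, dict[str, Any]] = {}  # in-memory stand-in for storage


def action(name: str):
    """Register a function as a named platform action."""
    def register(fn):
        ACTIONS[name] = fn
        return fn
    return register


@action("recipe.create")
def create_recipe(actor: str, title: str) -> dict[str, Any]:
    recipe_id = f"r{len(RECIPES) + 1}"
    RECIPES[recipe_id] = {"id": recipe_id, "title": title,
                          "owner": actor, "forked_from": None}
    return RECIPES[recipe_id]


@action("recipe.fork")
def fork_recipe(actor: str, recipe_id: str) -> dict[str, Any]:
    source = RECIPES[recipe_id]
    fork = create_recipe(actor, source["title"])
    fork["forked_from"] = recipe_id  # updates the stored record too
    return fork


def dispatch(name: str, actor: str, **params: Any) -> dict[str, Any]:
    """Single entry point shared by the browser UI and the public API."""
    if name not in ACTIONS:
        raise KeyError(f"unknown action: {name}")
    return ACTIONS[name](actor, **params)


# A button click and an agent's API call take exactly the same path.
ui_result = dispatch("recipe.create", actor="human:alice", title="KYC triage")
api_result = dispatch("recipe.fork", actor="agent:example-bot",
                      recipe_id=ui_result["id"])
```

Because the UI has no private code path, any new action registered here is available to agents automatically, which is the property the paragraph above argues for.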

What building this taught me

Before I wrote a line of code, I spent weeks defining the six personas who would use it, each backed by academic research, behavioural psychology, and real market data. That groundwork paid back every time a product decision came up. The seven-stage challenge lifecycle exists because the Business Sponsor persona research showed that decision confidence is built through visible progress, not a submit button followed by silence. The four reaction types exist because "Works In My Org" is a different trust signal from "Star." Specificity at the research stage makes every subsequent decision derivable rather than argued.

The other thing I learned the hard way: when building with AI, write the tests first. My early attempts to have AI build the orchestration logic directly kept producing code that was wrong in quiet ways: a branch condition off, a recovery loop skipping a case. The fix was to write tests for every decision point first, then build the implementation against those tests. Once the tests existed as a specification, the AI-generated code was consistently correct. Without them, the model optimised for code that looked plausible rather than code that was correct. That's the most generalisable thing I took from this build.
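To make the tests-first workflow concrete, here is a minimal sketch for one hypothetical decision point: what an orchestrator does after a step finishes. The `next_action` function, its status strings and the retry semantics are assumptions for illustration, not the platform's actual logic. The tests are written first and pin down the exact off-by-one boundary where a recovery loop could quietly skip a case; the implementation is then written to satisfy them.

```python
import unittest


# Step 1: the specification, written before any implementation exists.
class RecoveryPolicySpec(unittest.TestCase):
    def test_success_proceeds(self):
        self.assertEqual(next_action("ok", attempt=1, max_retries=3), "proceed")

    def test_transient_failure_retries_on_last_allowed_attempt(self):
        # Boundary case: 3 attempts made, 3 retries allowed -> one more try.
        self.assertEqual(next_action("transient_error", attempt=3, max_retries=3), "retry")

    def test_transient_failure_escalates_when_budget_spent(self):
        self.assertEqual(next_action("transient_error", attempt=4, max_retries=3), "escalate")

    def test_permanent_failure_never_retries(self):
        self.assertEqual(next_action("permanent_error", attempt=1, max_retries=3), "escalate")

    def test_unknown_status_escalates(self):
        self.assertEqual(next_action("weird", attempt=1, max_retries=3), "escalate")


# Step 2: the implementation, built against the spec above.
def next_action(status: str, attempt: int, max_retries: int) -> str:
    """HYPOTHETICAL decision point. `attempt` counts attempts made so far,
    including the one that just ended, so 1 + max_retries runs are allowed."""
    if status == "ok":
        return "proceed"
    if status == "transient_error" and attempt <= max_retries:
        return "retry"
    return "escalate"  # permanent, unknown, or out of retries


if __name__ == "__main__":
    unittest.main(argv=["spec"], exit=False)
```

With the spec in place, a generated implementation that writes `attempt < max_retries` fails the boundary test immediately instead of shipping silently.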

The meta-proof

My degree is in Economics with a Film minor. I have an MBA from Oxford. I am not a software engineer.

This platform is the living proof of what I've been arguing from stages at GDS Charlotte, at the AI in Financial Advice Conference, and in every Purple Fabric bootcamp I've run: that AI enables domain experts to build enterprise-grade systems. I'm not evangelising the idea. I am the case study.

The closest market comparable is Distyl AI, at a $1.8B valuation. The platform is an internal product at IntellectAI and is not yet public. A private case study is available on request.